Understanding and evaluating your artificial intelligence (AI) system’s predictions can be difficult. AI and machine learning (ML) classifiers are subject to limitations caused by a variety of factors, including concept or data drift, edge cases, the inherent uncertainty of ML training outcomes, and emerging phenomena unaccounted for in training data. These types of factors can lead to bias in a classifier’s predictions, compromising decisions made based on those predictions.

The SEI has developed a new AI Robustness (AIR) tool to help programs better understand and improve their AI classifier performance. In this blog post, we explain how the AIR tool works, provide an example of its use, and invite you to work with us if you want to use the AIR tool in your organization.

Challenges in Measuring Classifier Accuracy

There is little doubt that AI and ML tools are some of the most powerful tools developed in the last several decades. They are revolutionizing modern science and technology in the fields of prediction, automation, cybersecurity, intelligence gathering, training and simulation, and object detection, to name just a few. There is responsibility that comes with this great power, however. As a community, we need to be mindful of the idiosyncrasies and weaknesses associated with these tools and ensure we are taking them into account.

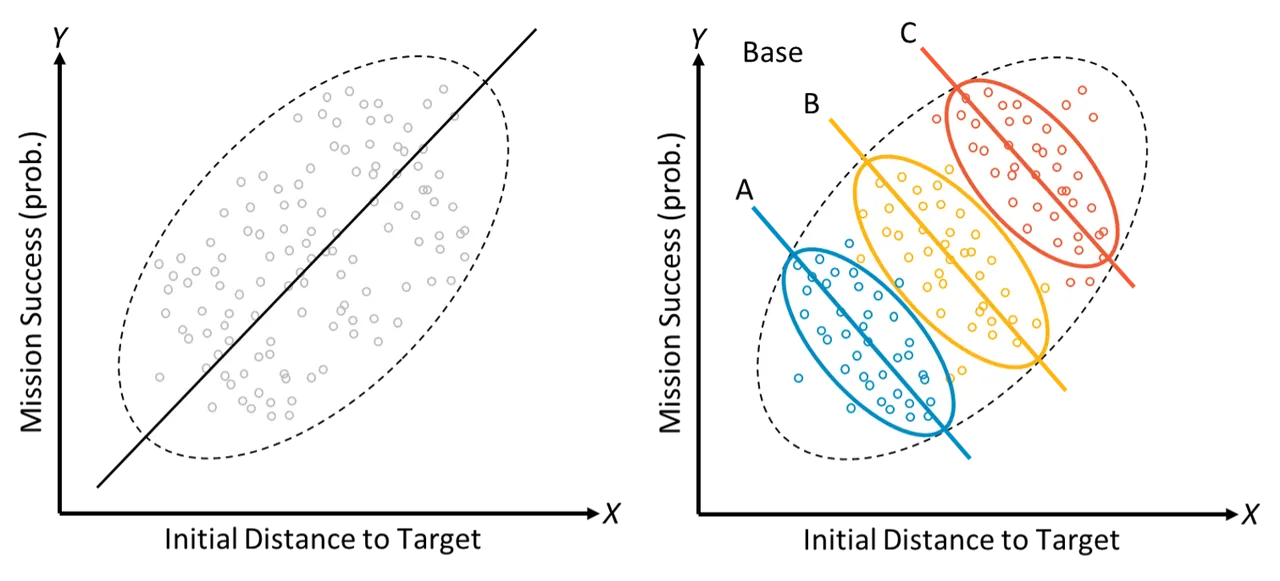

One of the greatest strengths of AI and ML is the ability to effectively recognize and model correlations (real or imagined) within the data, leading to modeling capabilities that in many areas excel at prediction beyond the methods of classical statistics. Such heavy reliance on correlations within the data, however, can easily be undermined by data or concept drift, evolving edge cases, and emerging phenomena. This can lead to models that leave alternative explanations unexplored, fail to account for key drivers, and even attribute causes to the wrong factors. Figure 1 illustrates this: at first glance (left) one might reasonably conclude that the likelihood of mission success increases as initial distance to the target grows. However, if one adds a third variable for base location (the colored ovals on the right of Figure 1), the relationship reverses because base location is a common cause of both success and distance. This is an example of a statistical phenomenon known as Simpson’s Paradox, where a trend in groups of data reverses or disappears after the groups are combined. This example is just one illustration of why it is critical to understand sources of bias in one’s data.

Figure 1: An illustration of Simpson’s Paradox
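To make the reversal concrete, the Python sketch below uses made-up mission counts (not the data behind Figure 1), with distance coarsened into near and far buckets and two hypothetical bases, A and B. Within each base, near missions succeed more often; once the bases are pooled, far missions appear more successful, because base B launches mostly far missions and also has a higher success rate overall.

```python
# Hypothetical counts, not from the SEI study: (successes, missions) per base and distance.
COUNTS = {
    ("A", "near"): (40, 80),
    ("A", "far"): (8, 20),
    ("B", "near"): (18, 20),
    ("B", "far"): (64, 80),
}


def rate(successes, missions):
    """Success rate for one base/distance cell."""
    return successes / missions


def pooled_rate(distance):
    """Success rate for one distance bucket after merging both bases."""
    successes = sum(s for (_, d), (s, n) in COUNTS.items() if d == distance)
    missions = sum(n for (_, d), (s, n) in COUNTS.items() if d == distance)
    return successes / missions


for base in ("A", "B"):
    near = rate(*COUNTS[(base, "near")])
    far = rate(*COUNTS[(base, "far")])
    print(f"base {base}: near={near:.2f} far={far:.2f}")  # near beats far in each base
print(f"pooled: near={pooled_rate('near'):.2f} far={pooled_rate('far'):.2f}")  # 0.58 vs 0.72
```

Within each base the near bucket wins (0.50 vs. 0.40 and 0.90 vs. 0.80), yet the pooled comparison flips the sign, which is exactly the pattern Figure 1 describes.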

To be effective in critical problem areas, classifiers also need to be robust: they need to be able to produce accurate results over time across a range of scenarios. When classifiers become untrustworthy due to emerging data (new patterns or distributions in the data that were not present in the original training set) or concept drift (when the statistical properties of the outcome variable change over time in unforeseen ways), they may become less likely to be used, or worse, may misguide a critical operational decision. Typically, to evaluate a classifier, one compares its predictions on a set of data to its expected behavior (ground truth). For AI and ML classifiers, the data originally used to train a classifier may be inadequate to yield reliable future predictions due to changes in context, threats, the deployed system itself, and the scenarios under consideration. Thus, there is no source of reliable ground truth over time.

Further, classifiers are often unable to extrapolate reliably to data they have not yet seen as they encounter unexpected or unfamiliar contexts that were not aligned with the training data. As a simple example, if you’re planning a flight mission from a base in a warm environment but your training data only includes cold-weather flights, predictions about fuel requirements and system health might not be accurate. For these reasons, it is critical to take causation into account. Knowing the causal structure of the data can help identify the various complexities associated with traditional AI and ML classifiers.
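A crude first line of defense against this kind of extrapolation is to flag inputs that fall outside the range seen in training. The sketch below assumes hypothetical feature names and values for the cold-weather training flights; a per-feature min/max check like this cannot catch unfamiliar combinations of individually in-range values, which is part of why the causal structure of the data matters.

```python
# Hypothetical training data: every recorded flight launched in cold weather.
TRAINING_FLIGHTS = [
    {"ambient_temp_c": -18.0, "wind_speed_kt": 12.0},
    {"ambient_temp_c": -5.0, "wind_speed_kt": 20.0},
    {"ambient_temp_c": 2.0, "wind_speed_kt": 8.0},
]


def feature_ranges(rows):
    """Min and max of each numeric feature observed in training."""
    keys = rows[0].keys()
    return {k: (min(r[k] for r in rows), max(r[k] for r in rows)) for k in keys}


def out_of_range(row, ranges):
    """Names of features whose value lies outside the training range."""
    return [k for k, (lo, hi) in ranges.items() if not lo <= row[k] <= hi]


ranges = feature_ranges(TRAINING_FLIGHTS)
warm_mission = {"ambient_temp_c": 30.0, "wind_speed_kt": 10.0}
print(out_of_range(warm_mission, ranges))  # ['ambient_temp_c']
```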

Causal Learning at the SEI

Causal learning is a field of statistics and ML that focuses on defining and estimating cause and effect in a systematic, data-driven way, aiming to uncover the underlying mechanisms that generate the observed outcomes. Whereas ML produces a model that can be used for prediction from new data, causal learning differs in its focus on modeling, or discovering, the cause-effect relationships inferable from a dataset. It answers questions such as:

- How did the data come to be the way it is?

- What system or context attributes are driving which outcomes?

Causal learning helps us formally answer the question “does X cause Y, or is there some other reason why they always seem to occur together?” For example, let’s say we have two variables, X and Y, that are clearly correlated. Humans historically tend to look at time-correlated events and assign causation. We might reason: first X happens, then Y happens, so clearly X causes Y. But how do we test this formally? Until recently, there was no formal methodology for testing causal questions like this. Causal learning allows us to build causal diagrams, account for bias and confounders, and estimate the magnitude of an effect even in unexplored scenarios.
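A small simulation shows why co-occurrence alone cannot settle the question. In this sketch, a hypothetical common cause Z drives both X and Y, while X has no effect on Y at all; the probabilities are assumptions chosen for illustration. The naive comparison of Y between X=1 and X=0 shows a sizable difference (about 0.30 in expectation), but averaging the comparison within levels of Z, weighted by how common each level is (a backdoor adjustment), brings it close to zero.

```python
import random


def simulate(n, seed=0):
    """Rows of (z, x, y): Z raises the chance of both X and Y; X never affects Y."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = int(rng.random() < 0.5)
        x = int(rng.random() < (0.8 if z else 0.2))
        y = int(rng.random() < (0.7 if z else 0.2))  # depends on Z only
        rows.append((z, x, y))
    return rows


def mean_y(rows, x=None, z=None):
    """Mean of Y over rows matching the given X and/or Z values."""
    ys = [r[2] for r in rows if (x is None or r[1] == x) and (z is None or r[0] == z)]
    return sum(ys) / len(ys)


def naive_difference(rows):
    """P(Y=1 | X=1) - P(Y=1 | X=0), ignoring Z."""
    return mean_y(rows, x=1) - mean_y(rows, x=0)


def adjusted_difference(rows):
    """Within-Z differences, averaged with weights P(Z=z)."""
    total = 0.0
    for z in (0, 1):
        p_z = sum(1 for r in rows if r[0] == z) / len(rows)
        total += p_z * (mean_y(rows, x=1, z=z) - mean_y(rows, x=0, z=z))
    return total


rows = simulate(100_000)
print(round(naive_difference(rows), 2))     # roughly 0.30: spurious, driven by Z
print(round(adjusted_difference(rows), 2))  # roughly 0.00: no causal effect of X
```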

Recent SEI research has applied causal learning to identifying how robust AI and ML system predictions are in the face of conditions and other edge cases that are extreme relative to the training data. The AIR tool, built on the SEI’s body of work in causal learning, provides a new capability to evaluate and improve classifier performance that, with the help of our partners, will be ready to transition to the DoD community.

How the AIR Tool Works

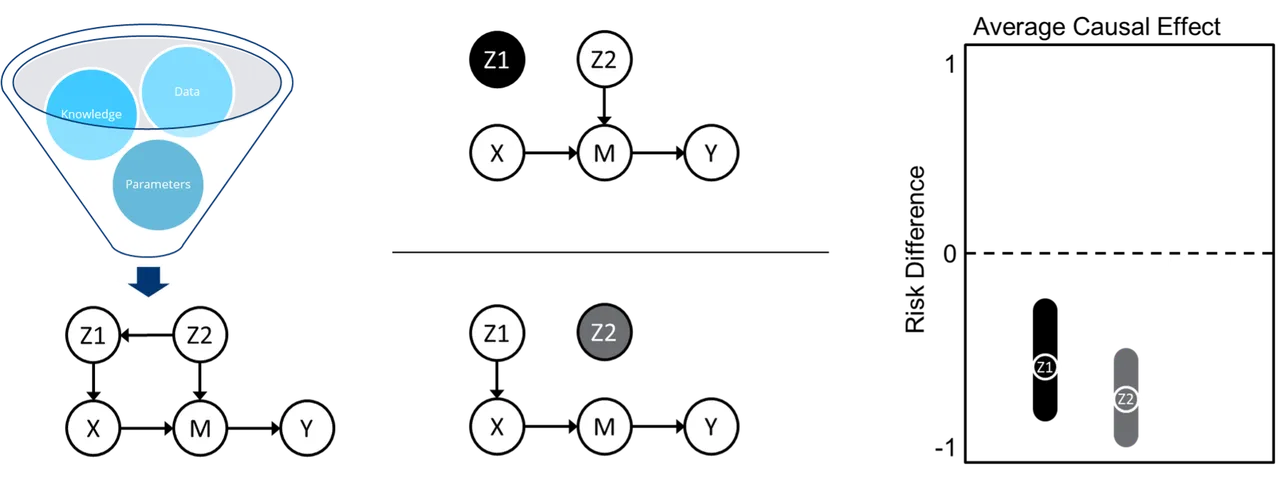

AIR is an end-to-end causal inference tool that builds a causal graph of the data, performs graph manipulations to identify key sources of potential bias, and uses state-of-the-art ML algorithms to estimate the average causal effect of a scenario on an outcome, as illustrated in Figure 2. It does this by combining three disparate, and often siloed, fields from within the causal learning landscape: causal discovery for building causal graphs from data, causal identification for identifying potential sources of bias in a graph, and causal estimation for calculating causal effects given a graph. Running the AIR tool requires minimal manual effort: a user uploads their data, defines some rough causal knowledge and assumptions (with some guidance), and selects appropriate variable definitions from a dropdown list.

Figure 2: Steps in the AIR tool

Causal discovery, on the left of Figure 2, takes as inputs data, rough causal knowledge and assumptions, and model parameters, and outputs a causal graph. For this, we use a state-of-the-art causal discovery algorithm called Best Order Score Search (BOSS). The resulting graph consists of a scenario variable (X), an outcome variable (Y), any intermediate variables (M), parents of either X (Z1) or M (Z2), and the direction of their causal relationships in the form of arrows.

Causal identification, in the middle of Figure 2, splits the graph into two separate adjustment sets aimed at blocking backdoor paths through which bias can be introduced. The goal is to avoid any spurious correlation between X and Y that is due to common causes of either X or M that can also affect Y. For example, Z2 is shown here to affect both X (via Z1) and Y (via M). To account for bias, we need to break any correlations between these variables.
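The sketch below encodes one plausible reading of the Figure 2 graph (Z2 → Z1 → X → M → Y, plus Z2 → M) as a parent map, then derives the two adjustment sets the way the UAV example later in this post describes them: the parents of X, and the parents of the intermediate variables excluding X and other intermediates. It also enumerates the backdoor paths from X to Y and checks that each set blocks them. The edge list and all helper names are assumptions, and the blocking check is simplified (it ignores descendants of colliders), so treat it as an illustration rather than AIR’s identification logic.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical parent map for the Figure 2 graph: node -> list of its direct causes.
PARENTS: Dict[str, List[str]] = {
    "Z2": [],
    "Z1": ["Z2"],
    "X": ["Z1"],
    "M": ["X", "Z2"],
    "Y": ["M"],
}


def adjustment_sets(parents, treatment, mediators) -> Tuple[Set[str], Set[str]]:
    """Set 1: parents of the treatment. Set 2: parents of the mediators,
    excluding the treatment and the mediators themselves."""
    set1 = set(parents[treatment])
    set2 = {p for m in mediators for p in parents[m]} - {treatment} - set(mediators)
    return set1, set2


def backdoor_paths(parents, x, y) -> List[List[str]]:
    """All simple paths from x to y whose first edge points into x."""
    children = {n: [c for c, ps in parents.items() if n in ps] for n in parents}
    found = []

    def walk(node, path):
        if node == y:
            found.append(path)
            return
        for nxt in parents[node] + children[node]:  # follow edges in either direction
            if nxt not in path:
                walk(nxt, path + [nxt])

    for p in parents[x]:
        walk(p, [x, p])
    return found


def blocks(parents, path, zset) -> bool:
    """Simplified blocking test for one path: a conditioned non-collider blocks it,
    and so does an unconditioned collider (collider descendants are ignored)."""
    for prev_node, node, next_node in zip(path, path[1:], path[2:]):
        collider = prev_node in parents[node] and next_node in parents[node]
        if collider and node not in zset:
            return True
        if not collider and node in zset:
            return True
    return False


set1, set2 = adjustment_sets(PARENTS, "X", ["M"])
paths = backdoor_paths(PARENTS, "X", "Y")
print(set1, set2, paths)  # {'Z1'} {'Z2'} [['X', 'Z1', 'Z2', 'M', 'Y']]
for zset in (set1, set2):
    print(all(blocks(PARENTS, p, zset) for p in paths))  # True
```

Either set alone cuts the single backdoor path X ← Z1 ← Z2 → M → Y, which is why the two sets can serve as independent checks on the same effect.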

Finally, causal estimation, illustrated on the right of Figure 2, uses an ML ensemble of doubly-robust estimators to calculate the effect of the scenario variable on the outcome and produce 95% confidence intervals associated with each adjustment set from the causal identification step. Doubly-robust estimators allow us to produce consistent results as long as at least one of two models is specified correctly: the outcome model (what is the probability of an outcome?) or the treatment model (what is the probability of a given value of the scenario variable, given the variables in the adjustment set?).
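Doubly-robust estimation can be sketched with augmented inverse probability weighting (AIPW), one standard doubly-robust estimator. This is not AIR’s ML ensemble: here the outcome and treatment models are simple lookup tables for a single binary confounder, the data are simulated with an assumed true effect of +0.2, and the 95% interval uses the usual normal approximation with the influence-function standard error. Pairing a correct outcome model with a deliberately wrong treatment model, or vice versa, still lands near 0.2; getting both wrong does not.

```python
import random
import statistics


def simulate(n, seed=1):
    """Simulated (z, x, y) rows: Z confounds X and Y, and X raises P(Y=1) by 0.2."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = int(rng.random() < 0.5)
        x = int(rng.random() < (0.8 if z else 0.2))
        y = int(rng.random() < 0.2 + 0.3 * z + 0.2 * x)
        rows.append((z, x, y))
    return rows


def fit_outcome(rows):
    """Outcome model E[Y | X=x, Z=z] as a table of stratum means."""
    table = {}
    for x in (0, 1):
        for z in (0, 1):
            ys = [yy for zz, xx, yy in rows if xx == x and zz == z]
            table[(x, z)] = sum(ys) / len(ys)
    return lambda x, z: table[(x, z)]


def fit_propensity(rows):
    """Treatment model P(X=1 | Z=z) as a table of stratum means."""
    table = {}
    for z in (0, 1):
        xs = [xx for zz, xx, _ in rows if zz == z]
        table[z] = sum(xs) / len(xs)
    return lambda z: table[z]


def aipw(rows, mu, e):
    """AIPW risk difference with a normal-approximation 95% interval."""
    psi = []
    for z, x, y in rows:
        m1, m0, p = mu(1, z), mu(0, z), e(z)
        psi.append(m1 - m0 + x * (y - m1) / p - (1 - x) * (y - m0) / (1 - p))
    est = statistics.fmean(psi)
    half_width = 1.96 * statistics.stdev(psi) / len(psi) ** 0.5
    return est, (est - half_width, est + half_width)


rows = simulate(100_000)
overall = statistics.fmean([y for _, _, y in rows])
good_mu, good_e = fit_outcome(rows), fit_propensity(rows)


def bad_mu(x, z):
    return overall  # misspecified outcome model: ignores both X and Z


def bad_e(z):
    return 0.5  # misspecified treatment model: ignores Z


print(aipw(rows, good_mu, bad_e))  # estimate close to the true 0.2
print(aipw(rows, bad_mu, good_e))  # estimate close to the true 0.2
print(aipw(rows, bad_mu, bad_e))   # biased toward the naive difference (about 0.38)
```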

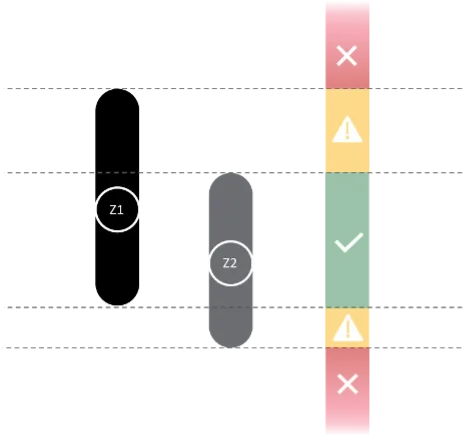

Figure 3: Interpreting the AIR tool’s results

The 95% confidence intervals calculated by AIR provide two independent checks on the behavior, or predicted outcome, of the classifier on a scenario of interest. While it might be an aberration if only one of the two bands is violated, it may also be a warning to monitor classifier performance for that scenario regularly in the future. If both bands are violated, a user should be wary of classifier predictions for that scenario. Figure 3 illustrates an example of two confidence interval bands.
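The decision rule in this paragraph fits in a few lines of Python. The function name and the returned labels are hypothetical; the rule simply counts how many of the two AIR intervals exclude a classifier’s estimated risk difference.

```python
from typing import Tuple

Interval = Tuple[float, float]  # (lower, upper) bounds of a 95% confidence interval


def interpret_bands(classifier_rd: float, band1: Interval, band2: Interval) -> str:
    """Count how many AIR confidence intervals exclude the classifier's estimate."""
    violated = sum(not (lo <= classifier_rd <= hi) for lo, hi in (band1, band2))
    if violated == 0:
        return "consistent"
    if violated == 1:
        return "monitor"  # possibly an aberration; watch this scenario going forward
    return "caution"  # both bands violated; be wary of predictions for this scenario


print(interpret_bands(0.12, (0.05, 0.20), (0.08, 0.22)))  # consistent
print(interpret_bands(0.21, (0.05, 0.20), (0.08, 0.22)))  # monitor
print(interpret_bands(0.45, (0.05, 0.20), (0.08, 0.22)))  # caution
```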

The two adjustment sets output by AIR provide recommendations of which variables or features to focus on for subsequent classifier retraining. In the future, we’d like to use the causal graph together with the learned relationships to generate synthetic training data for improving classifier predictions.

The AIR Tool in Action

To demonstrate how the AIR tool might be used in a real-world scenario, consider the following example. A notional DoD program is using unmanned aerial vehicles (UAVs) to collect imagery, and the UAVs can start the mission from two different base locations. Each location has different environmental conditions associated with it, such as wind speed and humidity. The program seeks to predict mission success, defined as the UAV successfully acquiring images, based on the starting location, and it has built a classifier to aid in these predictions. Here, the scenario variable, or X, is the base location.

The program may want to understand not just what mission success looks like based on which base is used, but why. Unrelated events may end up changing the value or impact of environmental variables enough that classifier performance begins to degrade.

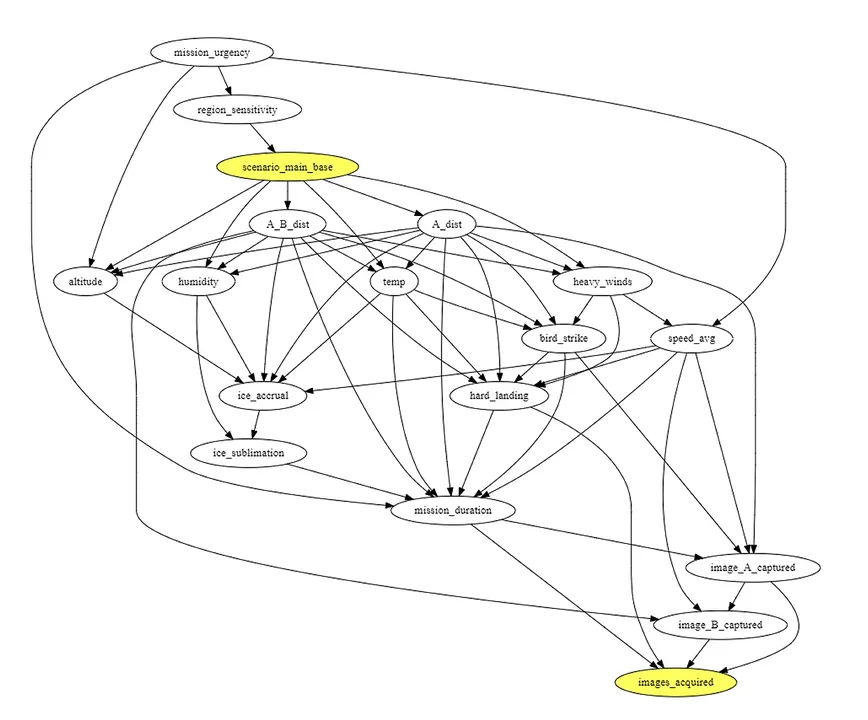

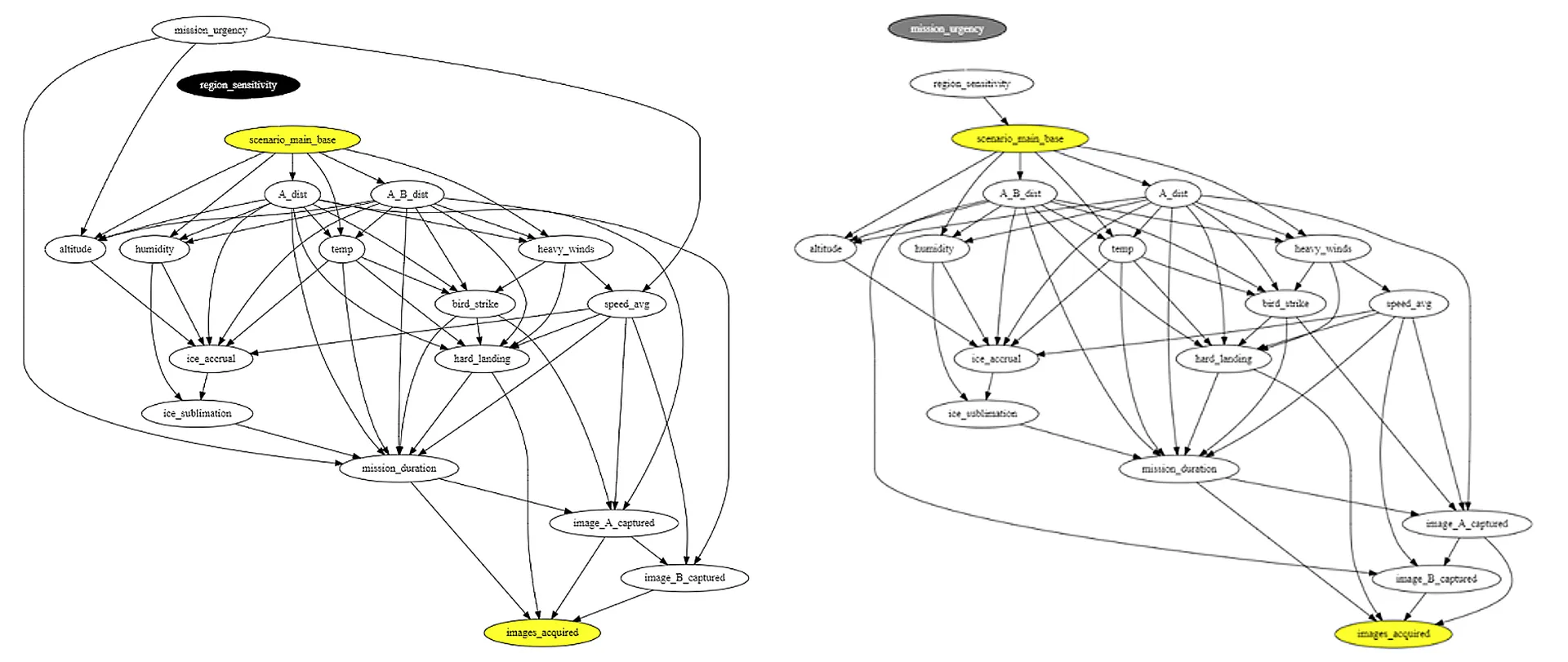

Figure 4: Causal graph of direct cause-effect relationships in the UAV example scenario.

The first step of the AIR tool applies causal discovery tools to generate a causal graph (Figure 4) of the most likely cause-and-effect relationships among variables. For example, ambient temperature affects the amount of ice accumulation a UAV might experience, which can affect whether the UAV is able to successfully fulfill its mission of obtaining images.

In step 2, AIR infers two adjustment sets to help detect bias in a classifier’s predictions (Figure 5). The graph on the left is the result of controlling for the parents of the main base treatment variable. The graph on the right is the result of controlling for the parents of the intermediate variables (apart from other intermediate variables), such as environmental conditions. Removing edges from these adjustment sets removes potential confounding effects, allowing AIR to characterize the impact that choosing the main base has on mission success.

Figure 5: Causal graphs corresponding to the two adjustment sets.

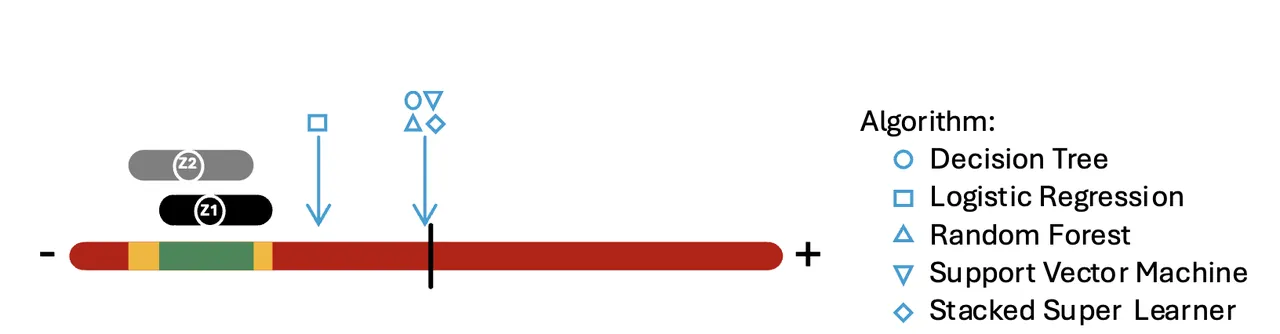

Finally, in step 3, AIR calculates the risk difference that the main base choice has on mission success. This risk difference is calculated by applying non-parametric, doubly-robust estimators to the task of estimating the impact that X has on Y, adjusting for each set separately. The result is a point estimate and a confidence range, shown here in Figure 6. As the plot shows, the ranges for the two sets are similar, and analysts can now compare these ranges to the classifier predictions.

Figure 6: Risk difference plot showing the average causal effect (ACE) of each adjustment set (i.e., Z1 and Z2) alongside AI/ML classifiers. The continuum ranges from -1 to 1 (left to right) and is colored based on level of agreement with ACE intervals.
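For a binary scenario variable X (launch from the main base or not), a binary outcome Y (mission success), and one adjustment set Z, the quantity on the x-axis can be written as follows. The first line is the standard backdoor-adjustment form of the risk difference, which is why it is bounded by -1 and 1; the second is the usual AIPW (doubly-robust) estimator of it, with $\hat\mu_x(z)$ an outcome model for $P(Y=1 \mid X=x, Z=z)$ and $\hat e(z)$ a treatment model for $P(X=1 \mid Z=z)$. These are textbook forms, given as an assumption about what such estimators compute rather than as AIR’s exact implementation.

$$
\mathrm{RD} = P\big(Y=1 \mid \mathrm{do}(X=1)\big) - P\big(Y=1 \mid \mathrm{do}(X=0)\big)
= \sum_{z} \Big[ P(Y=1 \mid X=1, Z=z) - P(Y=1 \mid X=0, Z=z) \Big] \, P(Z=z)
$$

$$
\widehat{\mathrm{RD}}_{\mathrm{DR}} = \frac{1}{n} \sum_{i=1}^{n} \left[ \hat\mu_1(Z_i) - \hat\mu_0(Z_i) + \frac{X_i \big(Y_i - \hat\mu_1(Z_i)\big)}{\hat e(Z_i)} - \frac{(1 - X_i)\big(Y_i - \hat\mu_0(Z_i)\big)}{1 - \hat e(Z_i)} \right]
$$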

Figure 6 represents the risk difference associated with a change in the scenario variable, i.e., scenario_main_base. The x-axis spans negative to positive effects: a positive risk difference means the scenario increases the likelihood of the outcome, and a negative one means it decreases it; the midpoint corresponds to no significant effect. Alongside the causally derived confidence intervals, we also include a point estimate of the risk difference as learned by each of five popular ML algorithms: decision tree, logistic regression, random forest, stacked super learner, and support vector machine. These inclusions illustrate that these problems are not specific to any particular ML algorithm. ML algorithms are designed to learn from correlation, not the deeper causal relationships implied by the same data. The classifiers’ predicted risk differences, represented by various light blue shapes, fall outside the AIR-calculated causal bands. This result indicates that these classifiers are likely not accounting for confounding due to some variables, and the AI classifier(s) should be retrained with more data: in this case, data representing launch from the main base versus launch from another base, with a variety of values for the variables appearing in the two adjustment sets. In the future, the SEI plans to add a health report to help the AI classifier maintainer identify additional ways to improve AI classifier performance.
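One common way to read a risk difference off a trained classifier, and an assumption about how points like the light blue shapes could be produced, is to toggle the scenario variable for every row while holding the other features fixed and average the change in predicted success probability. The sketch below does that for any function that maps a feature dict to a probability; the toy classifier, its coefficients, and the `wind_speed_kt` feature are hypothetical. The result measures what the classifier learned, which can include confounding absorbed from the training data.

```python
import math
from typing import Callable, Dict, List

Row = Dict[str, float]


def classifier_risk_difference(
    predict: Callable[[Row], float],
    rows: List[Row],
    treatment: str = "scenario_main_base",
) -> float:
    """Mean change in predicted P(success) when `treatment` goes from 0 to 1,
    holding every other feature of each row fixed."""
    diffs = [
        predict({**row, treatment: 1.0}) - predict({**row, treatment: 0.0})
        for row in rows
    ]
    return sum(diffs) / len(diffs)


def toy_predict(row: Row) -> float:
    """Hypothetical classifier: a logistic score over base choice and wind speed."""
    score = -0.5 + 1.2 * row["scenario_main_base"] - 0.05 * row["wind_speed_kt"]
    return 1.0 / (1.0 + math.exp(-score))


rows = [
    {"scenario_main_base": 1.0, "wind_speed_kt": 10.0},
    {"scenario_main_base": 0.0, "wind_speed_kt": 25.0},
]
rd = classifier_risk_difference(toy_predict, rows)
print(round(rd, 3))  # a value in [-1, 1], comparable against the AIR bands
```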

Using the AIR tool, the program team in this scenario now has a better understanding of the data and a more explainable AI.

How Generalizable Is the AIR Tool?

The AIR tool can be used across a broad range of contexts and scenarios. For example, organizations with classifiers employed to help make business decisions about prognostic health maintenance, automation, object detection, cybersecurity, intelligence gathering, simulation, and many other applications may find value in implementing AIR.

While the AIR tool is generalizable to scenarios of interest from many fields, it does require a representative data set that meets current tool requirements. If the underlying data set is of reasonable quality and completeness (i.e., the data includes significant causes of both treatment and outcome), the tool can be applied broadly.

Opportunities to Partner

The AIR team is currently seeking collaborators to contribute to and influence the continued maturation of the AIR tool. If your organization has AI or ML classifiers and subject-matter experts to help us understand your data, our team can help you build a tailored implementation of the AIR tool. You’ll work closely with the SEI AIR team, experimenting with the tool to learn about your classifiers’ performance and to support our ongoing research into evolution and adoption. Some of the roles that could benefit from, and help us improve, the AIR tool include:

- ML engineers: helping identify test cases and validate the data

- data engineers: creating data models to drive the causal discovery and inference stages

- quality engineers: ensuring the AIR tool is using appropriate verification and validation methods

- program leaders: interpreting the information from the AIR tool

With SEI adoption support, partnering organizations gain in-house expertise, innovative insight into causal learning, and knowledge to improve AI and ML classifiers.