GPUs have an insatiable appetite for data, and keeping these processors fed is often a challenge. That’s one of the big reasons WEKA launched a new line of data storage appliances last week that can move data at up to 18 million IOPS and serve 720 GB of data per second.

The latest GPUs from Nvidia can ingest data from memory at incredible speeds: up to 2 TB per second for the A100 and 3.35 TB per second for the H100. This kind of memory bandwidth, delivered by HBM2e on the A100 and the newer HBM3 standard on the H100, is needed to train the biggest large language models (LLMs) and run other scientific workloads.

Keeping the PCIe buses saturated with data is critical to using the full capacity of the GPUs, and that requires a data storage infrastructure that can keep up. The folks at WEKA say they’ve done that with the new WEKApod line of data storage appliances the company launched last week.

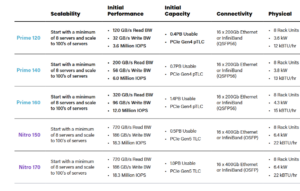

The company is offering two versions of the WEKApod: the Prime and the Nitro. Both families start with clusters of eight rack-based servers and around half a petabyte of data, and can scale to support hundreds of servers and multiple petabytes of data.

The Prime line of WEKApods is based on PCIe Gen4 technology and 200Gb Ethernet or InfiniBand connectivity. It starts at 3.6 million IOPS and 120 GB per second of read throughput, and goes up to 12 million IOPS and 320 GB per second of read throughput.

The Nitro line is based on PCIe Gen5 technology and 400Gb Ethernet or InfiniBand connectivity. Both the Nitro 150 and the Nitro 180 are rated at 18 million IOPS and can hit data read speeds of 720 GB per second and data write speeds of 186 GB per second.
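The link speeds above are quoted in gigabits per second, while throughput is quoted in gigabytes per second. As a rough sanity check, the Python sketch below converts between the two and computes a lower bound on how many network ports running at full line rate a cluster would need to carry the quoted peak read throughput. It assumes decimal units, 8 bits per byte, and zero protocol overhead; the port counts are illustrative arithmetic, not WEKA configuration figures, and the function names are hypothetical.

```python
import math

# Figures quoted in the article (decimal units).
# Link speeds are gigabits per second; read throughput is gigabytes per second.
SPECS = {
    "Prime (top config)": {"link_gbit": 200, "read_gbyte_s": 320},
    "Nitro": {"link_gbit": 400, "read_gbyte_s": 720},
}


def gbit_to_gbyte(gbit: float) -> float:
    """Convert gigabits to gigabytes, assuming 8 bits per byte."""
    return gbit / 8


def min_ports(read_gbyte_s: float, link_gbit: float) -> int:
    """Lower bound on ports at full line rate, ignoring all protocol overhead."""
    return math.ceil(read_gbyte_s / gbit_to_gbyte(link_gbit))


for name, s in SPECS.items():
    per_port = gbit_to_gbyte(s["link_gbit"])
    ports = min_ports(s["read_gbyte_s"], s["link_gbit"])
    print(f"{name}: {per_port:.0f} GB/s per port, at least {ports} ports "
          f"for {s['read_gbyte_s']} GB/s")
```

Because these are cluster-wide figures, the ports would be spread across the eight or more servers in a WEKApod cluster; real deployments also need headroom for protocol overhead.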

Enterprise AI workloads require high performance for both reading and writing data, says Colin Gallagher, vice president of product marketing for WEKA.

“Several of our competitors have lately been claiming to be the best data infrastructure for AI,” Gallagher says in a video on the WEKA website. “But to do so they selectively quote a single number, often one for reading data, and leave others out. For modern AI workloads, one performance data number is misleading.”

That’s because, in AI data pipelines, there’s a critical interplay between reading and writing data as AI workloads change, he says.

“Initially, data is ingested from various sources for training, loaded into memory, preprocessed and written back out,” Gallagher says. “During training, it’s continuously read to update model parameters, checkpoints of various sizes are saved, and results are written for evaluation. After training, the model generates outputs which are written for further analysis or use.”
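Gallagher’s point can be made concrete with a simplified model: if a pipeline phase reads R GB and writes W GB back to back at peak throughput, its storage time is R / read_bw + W / write_bw. The sketch below plugs in the Nitro’s quoted 720 GB/s read and 186 GB/s write figures. The phase names follow the quote, but the data volumes are hypothetical values chosen only for illustration, and the model ignores overlap, caching, and contention.

```python
from dataclasses import dataclass

# Peak figures quoted for WEKApod Nitro (GB/s, decimal units).
READ_GB_S = 720
WRITE_GB_S = 186


@dataclass
class Phase:
    name: str
    read_gb: float   # data read from storage in this phase (hypothetical)
    write_gb: float  # data written to storage in this phase (hypothetical)


def io_seconds(phase: Phase, read_bw: float = READ_GB_S,
               write_bw: float = WRITE_GB_S) -> float:
    """Storage time if reads and writes run back to back at peak throughput."""
    return phase.read_gb / read_bw + phase.write_gb / write_bw


# Hypothetical volumes, chosen only to illustrate a mixed read/write pipeline.
phases = [
    Phase("ingest + preprocess", read_gb=2000, write_gb=1000),
    Phase("training + checkpoints", read_gb=1000, write_gb=500),
    Phase("inference outputs", read_gb=100, write_gb=200),
]

for p in phases:
    total = io_seconds(p)
    writes = p.write_gb / WRITE_GB_S
    print(f"{p.name}: {total:.1f} s of storage time, {writes:.1f} s of it writes")
```

Because the quoted write figure is roughly a quarter of the read figure, write-heavy steps such as checkpointing can dominate storage time even when they move less data, which is exactly what a read-only benchmark number would hide.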

The WEKApods use WekaFS, the company’s high-speed parallel file system, which supports a variety of protocols. The appliances support GPUDirect Storage (GDS), an RDMA-based protocol developed by Nvidia, to improve bandwidth and reduce latency between the server NIC and GPU memory.

WekaFS has full support for GDS and has been validated by Nvidia, including a reference architecture, WEKA says. WEKApod Nitro is also certified for Nvidia DGX SuperPOD.

WEKA says its new appliances include an array of enterprise features, such as support for multiple protocols (NFS, SMB, S3, POSIX, GDS, and CSI); encryption; backup/recovery; snapshotting; and data protection mechanisms.

For data protection specifically, WEKA says it uses a patented distributed data protection coding scheme to guard against data loss caused by server failures. The company says it delivers the scalability and durability of erasure coding, “but without the performance penalty.”
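WEKA does not describe its patented scheme here, so the sketch below shows only the general idea behind erasure coding, not WEKA’s method. Data is split into k chunks stored on different servers, plus parity chunks computed from them, so a lost chunk can be rebuilt from the survivors. This minimal Python example uses a single XOR parity chunk, which tolerates exactly one failure; production systems typically use Reed-Solomon-style codes with several parity chunks. All function names are hypothetical.

```python
from __future__ import annotations

from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length chunks."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal chunks (zero-padded) plus one XOR parity chunk."""
    size = -(-len(data) // k)  # ceiling division
    padded = data.ljust(size * k, b"\0")
    chunks = [padded[i * size:(i + 1) * size] for i in range(k)]
    return chunks + [reduce(xor_bytes, chunks)]


def recover(stripe: list[bytes | None]) -> list[bytes]:
    """Rebuild at most one missing chunk (marked None) from the survivors."""
    missing = [i for i, c in enumerate(stripe) if c is None]
    if len(missing) > 1:
        raise ValueError("single XOR parity tolerates only one lost chunk")
    rebuilt = list(stripe)
    if missing:
        # XOR of all k+1 chunks is zero, so the lost chunk is the XOR of the rest.
        rebuilt[missing[0]] = reduce(xor_bytes, [c for c in stripe if c is not None])
    return rebuilt


def capacity_overhead(k: int, m: int) -> float:
    """Extra capacity consumed by m redundant chunks per k data chunks."""
    return m / k


original = b"checkpoint data!"
stripe = encode(original, k=4)
stripe[2] = None  # simulate losing the server that held chunk 2
restored = recover(stripe)
print(b"".join(restored[:4])[: len(original)])
print(f"4+1 parity overhead: {capacity_overhead(4, 1):.0%}")
# Three-way replication is the k=1, m=2 case: two extra full copies.
print(f"3-way replication overhead: {capacity_overhead(1, 2):.0%}")
```

The capacity trade-off is what makes erasure coding attractive: one parity chunk per four data chunks costs 25% extra capacity, whereas keeping three full replicas costs 200%. The rebuild step, which must read every surviving chunk, is where erasure coding traditionally pays its performance penalty.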

“Accelerated adoption of generative AI applications and multi-modal retrieval-augmented generation has permeated the enterprise faster than anyone could have predicted, driving the need for affordable, highly performant and flexible data infrastructure solutions that deliver extremely low latency, drastically reduce the cost per tokens generated and can scale to meet the current and future needs of organizations as their AI initiatives evolve,” WEKA Chief Product Officer Nilesh Patel said in a press release. “WEKApod Nitro and WEKApod Prime offer unparalleled flexibility and choice while delivering exceptional performance, energy efficiency, and value to accelerate their AI projects anywhere and everywhere they need them to run.”
